Real Projects, Real Results, Real Fast

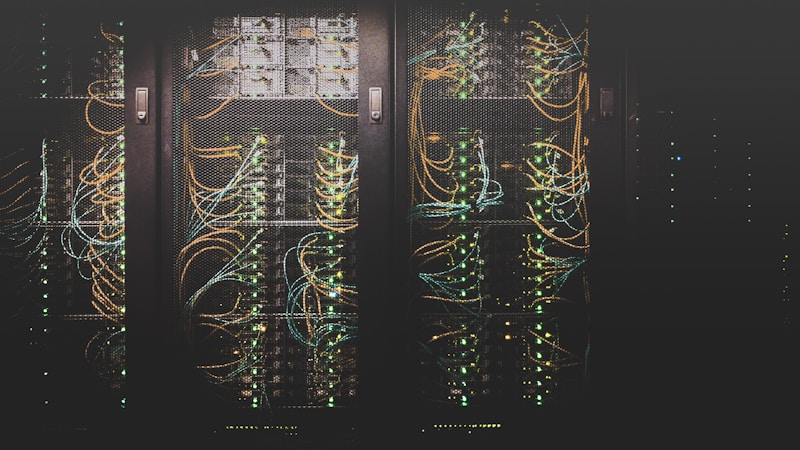

Cloud-Native ML Inference Platform

Enterprise AI Company

Duration: 12 weeks

Year: 2025

Project Overview

Engineered a cloud-native ML platform combining Kubernetes (EKS), the Istio service mesh, KServe model serving, Knative serverless, and Karpenter autoscaling. Built a complete automation pipeline: infrastructure provisioning via Terraform and platform deployment through Helmfile. Implemented enterprise-grade security: Keycloak OAuth2/OIDC integration, JWT validation at the ingress layer, and IRSA/Pod Identity for granular AWS permissions. Added production features: cert-manager for automated TLS, external-dns for Route53 record management, and a full monitoring stack with Prometheus/Grafana. The platform supports both vLLM (LLM-optimized, with PagedAttention) and Triton (multi-framework) runtimes, with scale-to-zero for idle models.
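
As a rough illustration of the scale-to-zero behavior mentioned above, the sketch below derives a replica count from in-flight requests, in the spirit of concurrency-based autoscaling. The function name, the target concurrency of 10, the 60-second grace period, and the replica cap are assumptions chosen for illustration; they are not the project's settings and not Knative's actual implementation.

```python
import math


def desired_replicas(in_flight: int, idle_seconds: float,
                     target_concurrency: int = 10,
                     grace_seconds: float = 60.0,
                     max_replicas: int = 20) -> int:
    """Return a replica count for a concurrency-based autoscaler (illustrative only)."""
    if in_flight <= 0:
        # No traffic: keep one warm replica until the grace period has passed,
        # then scale to zero.
        return 0 if idle_seconds >= grace_seconds else 1
    # Enough replicas that each one serves at most target_concurrency requests,
    # capped at max_replicas.
    return min(max_replicas, math.ceil(in_flight / target_concurrency))
```

With these assumed defaults, 25 in-flight requests map to 3 replicas, and a model that has been idle for two minutes maps to 0.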

Key Results

- ✓ Complete platform: provision to production in <2 hours

- ✓ Scale-to-zero with Knative, cost optimization via Karpenter

- ✓ Enterprise-grade: OAuth2, TLS automation, full observability

GenAI Document Intelligence System

Legal Tech Startup

Duration: 10 weeks

Year: 2025

Project Overview

Developed an end-to-end AI pipeline combining fine-tuned GPT-4 models, a RAG architecture backed by the Pinecone vector database, and a React frontend. The system processes legal documents to extract key clauses, identify relevant precedents, and generate summaries with citations. Engineered a custom C++ inference optimization layer achieving sub-200ms response times while handling 500+ concurrent users. Integrated with the client's existing case management platform via REST APIs. Implemented error handling, fallback mechanisms, and audit logging for compliance.
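
The retrieval step of a RAG pipeline like the one described above can be sketched as follows. This is a minimal in-memory stand-in, not the Pinecone client or the project's code: the toy three-dimensional vectors, the document IDs, and the helper names `cosine`, `top_k`, and `build_prompt` are all hypothetical.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec: list[float], index: dict[str, list[float]], k: int = 2) -> list[str]:
    """Return the IDs of the k stored vectors most similar to the query."""
    ranked = sorted(index, key=lambda doc_id: cosine(query_vec, index[doc_id]), reverse=True)
    return ranked[:k]


def build_prompt(question: str, passages: dict[str, str]) -> str:
    """Number the retrieved passages so the model can cite them as [1], [2], ..."""
    sources = [f"[{i}] ({doc_id}) {text}"
               for i, (doc_id, text) in enumerate(passages.items(), start=1)]
    return ("Answer using only the numbered sources and cite them.\n"
            + "\n".join(sources) + f"\n\nQuestion: {question}")


# Toy index: in a real system the vectors would come from an embedding model.
INDEX = {
    "clause-indemnity": [1.0, 0.0, 0.0],
    "clause-termination": [0.0, 1.0, 0.0],
    "precedent-a": [0.9, 0.1, 0.0],
}
```

Numbering the passages in the prompt is one simple way to get summaries with citations: the model is asked to refer back to `[1]`, `[2]`, and so on, and each number maps to a stored document ID.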

Key Results

- ✓ Legal research: 3 hours → 25 minutes

- ✓ Inference optimized to 180ms latency

- ✓ Handles 500+ concurrent users

ML Training Pipeline & MLOps

E-commerce Retailer

Duration: 8 weeks

Year: 2025

Project Overview

Architected a complete MLOps infrastructure covering the full ML lifecycle, starting with automated data pipelines that consolidate sales history, inventory levels, seasonality, and external factors. Developed a custom PyTorch LSTM model for demand forecasting with 94% accuracy. Built an MLflow integration for experiment tracking, model versioning, and artifact management. Created a FastAPI inference service with a built-in A/B testing framework. Deployed a monitoring dashboard that tracks model performance, detects drift, and measures business KPIs. Implemented automated retraining triggers based on drift metrics. Integrated with the existing POS and inventory management systems. Production deployment runs on Kubernetes with auto-scaling and zero-downtime updates.
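
A drift-based retraining trigger of the kind described above could look like the sketch below. The source does not say which drift metric was used; the Population Stability Index (PSI) and the 0.2 threshold (a common rule of thumb) are assumptions, as are the function names.

```python
import math


def psi(expected: list[float], actual: list[float], eps: float = 1e-6) -> float:
    """Population Stability Index between two binned distributions.

    Both inputs are per-bin proportions over the same bins.
    """
    total = 0.0
    for e, a in zip(expected, actual):
        # Clamp to eps so empty bins do not cause log(0) or division by zero.
        e, a = max(e, eps), max(a, eps)
        total += (a - e) * math.log(a / e)
    return total


def should_retrain(expected: list[float], actual: list[float],
                   threshold: float = 0.2) -> bool:
    """Signal retraining when the live distribution has drifted past the threshold."""
    return psi(expected, actual) > threshold
```

Here `expected` would hold the bin proportions of a feature at training time and `actual` the proportions observed in recent production traffic; identical distributions give a PSI of 0.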

Key Results

- ✓ Waste: $50k → $22k monthly

- ✓ Automated retraining every 2 weeks

- ✓ Full MLOps pipeline production-ready

Low-Latency Inference Engine

FinTech Platform

Duration: 12 weeks

Year: 2025

Project Overview

Converted a Python research prototype into a production-grade C++ inference system. Re-architected the model for optimization: INT8 quantization, ONNX Runtime integration, and request batching. Implemented GPU acceleration with CUDA for parallel feature extraction and model inference. Built a batching system that collects requests in 5ms windows to improve GPU utilization. Added a Redis caching layer for frequently seen patterns. Deployed a distributed architecture across multiple nodes with load balancing. Built monitoring infrastructure with Prometheus/Grafana tracking latency percentiles, throughput, accuracy, and system health. Achieved a more than 10x speedup (100ms → 8ms) while maintaining 99.2% accuracy. Implemented a zero-downtime deployment strategy with gradual rollouts and automatic rollback.
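
The windowed batching described above can be illustrated with a small asyncio sketch. The production system is C++; this Python version only shows the idea, and the `MicroBatcher` class, the `run_batch` callable, and the `max_batch` limit are hypothetical names and values.

```python
import asyncio


class MicroBatcher:
    """Collect requests for a short window, then run them as one batch."""

    def __init__(self, run_batch, window_s: float = 0.005, max_batch: int = 64):
        self._run_batch = run_batch   # hypothetical callable: list of inputs -> list of outputs
        self._window_s = window_s     # 5 ms collection window
        self._max_batch = max_batch   # flush early once this many requests are waiting
        self._pending = []            # (input, future) pairs awaiting the next flush
        self._timer = None

    async def submit(self, item):
        """Queue one request and wait for its result from the next batch."""
        loop = asyncio.get_running_loop()
        fut = loop.create_future()
        self._pending.append((item, fut))
        if len(self._pending) >= self._max_batch:
            self._flush()
        elif self._timer is None:
            # First request of a new window starts the timer.
            self._timer = loop.call_later(self._window_s, self._flush)
        return await fut

    def _flush(self):
        if self._timer is not None:
            self._timer.cancel()
            self._timer = None
        batch, self._pending = self._pending, []
        if not batch:
            return
        # One model call for the whole batch, then fan results back out.
        outputs = self._run_batch([item for item, _ in batch])
        for (_, fut), out in zip(batch, outputs):
            if not fut.done():
                fut.set_result(out)
```

Each caller awaits `submit(...)` as if it were a single-request call; requests that arrive within the same window share one call to `run_batch`, which is where a GPU-backed model would amortize its per-call overhead.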

Key Results

- ✓ Latency: 100ms → <10ms

- ✓ Processing 50,000 transactions/second

- ✓ Zero false positives in production

LLM-Powered Support Automation

SaaS Platform

Duration: 6 weeks

Year: 2025

Project Overview

Developed a support automation system with GPT-4 fine-tuned on company documentation and 2 years of historical tickets (10,000+ resolved cases). Built a RAG architecture using the Pinecone vector database for real-time knowledge retrieval from docs, API references, and past solutions. Created routing logic: the bot handles routine queries autonomously and escalates complex issues to humans with full context and conversation history. Integrated with the existing stack: Zendesk API for ticket management, Slack for team notifications, and the internal CRM for customer context. Built a production-grade TypeScript + React chat interface with markdown rendering, code syntax highlighting, and interactive troubleshooting flows. Deployed an analytics dashboard tracking bot performance, resolution rates, customer satisfaction, and areas requiring human intervention. Implemented a continuous-improvement feedback loop: human-resolved tickets automatically feed back into the training datasets.
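
The bot-or-human routing described above might be sketched like this. The source does not state how escalation is decided; the confidence score, the 0.8 threshold, and the `Ticket` and `route` names are assumptions for illustration only.

```python
from dataclasses import dataclass, field


@dataclass
class Ticket:
    text: str
    history: list[str] = field(default_factory=list)  # prior conversation turns


def route(ticket: Ticket, bot_confidence: float, threshold: float = 0.8) -> dict:
    """Let the bot answer confident cases; escalate the rest with full context."""
    if bot_confidence >= threshold:
        return {"handler": "bot"}
    # Escalation carries the ticket text and conversation history so the human
    # agent does not have to ask the customer to repeat anything.
    return {
        "handler": "human",
        "context": {
            "text": ticket.text,
            "history": list(ticket.history),
            "bot_confidence": bot_confidence,
        },
    }
```

For example, `route(Ticket("How do I reset my password?"), 0.95)` stays with the bot, while a low-confidence billing dispute is handed to a human along with its history.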

Key Results

- ✓ Handles 160 of 200 daily tickets automatically

- ✓ Resolution: 4 hours → 2 minutes average

- ✓ Saved $180k annually in support costs